ASTrA - Adversarial Self-supervised Learning with Learnable Attack Strategy

International Conference on Learning Representations 2025

Chhipa, P.C.*, Vashishtha, G.*, Settur, J.*, Saini, R., Shah, M., Liwicki, M. ASTrA: Adversarial Self-Supervised Training with Adaptive Attacks. International Conference on Learning Representations. (ICLR 2025)

Prakash Chandra Chhipa, Gautam Vashishtha, and Jithamanyu Settur - equal contribution.

Read Paper | Source Code @ GitHub | Check Poster | Watch Video @ YouTube

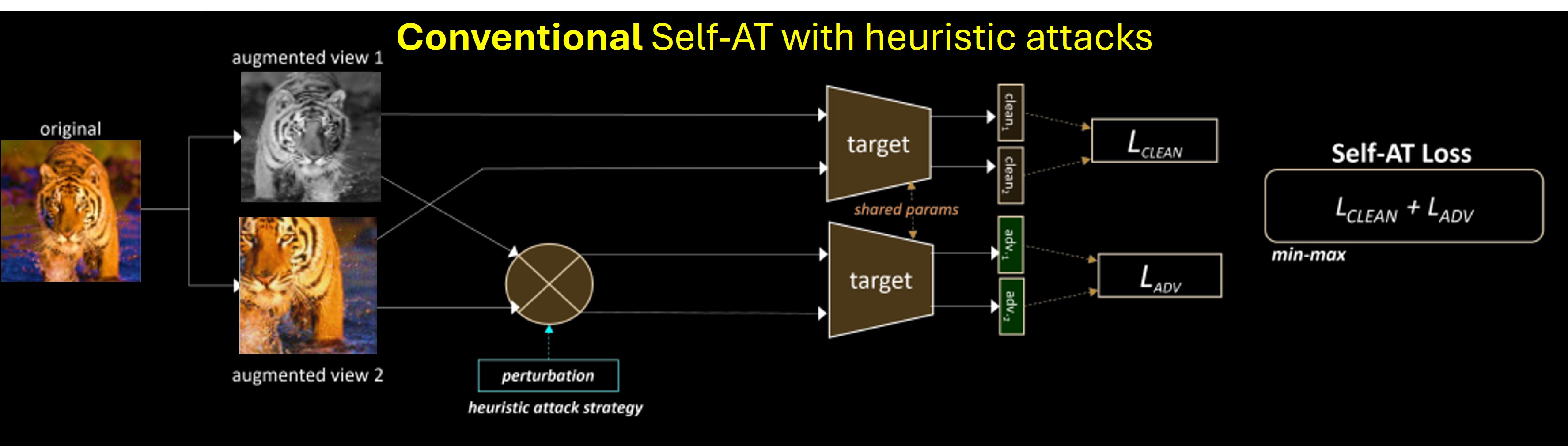

Adversarial Training (AT) has emerged as the most prominent defense against adversarial attacks in supervised learning. Inspired by recent progress in self-supervised learning, self-supervised adversarial training (Self-AT) methods attempt to leverage unlabelled data to achieve adversarial robustness. However, existing Self-AT methods rely on heuristic attacks, which are static and fail to adapt to varying data distributions and models.

Challenges in Conventional Self-AT:

- Heuristic attacks are static and fail to adapt to the evolving dynamics of SSL models.

- Adversarial robustness relies on manually tuned attack parameters, making it impractical for real-world scenarios.

- Lack of optimization for attack strategies leads to suboptimal representations.

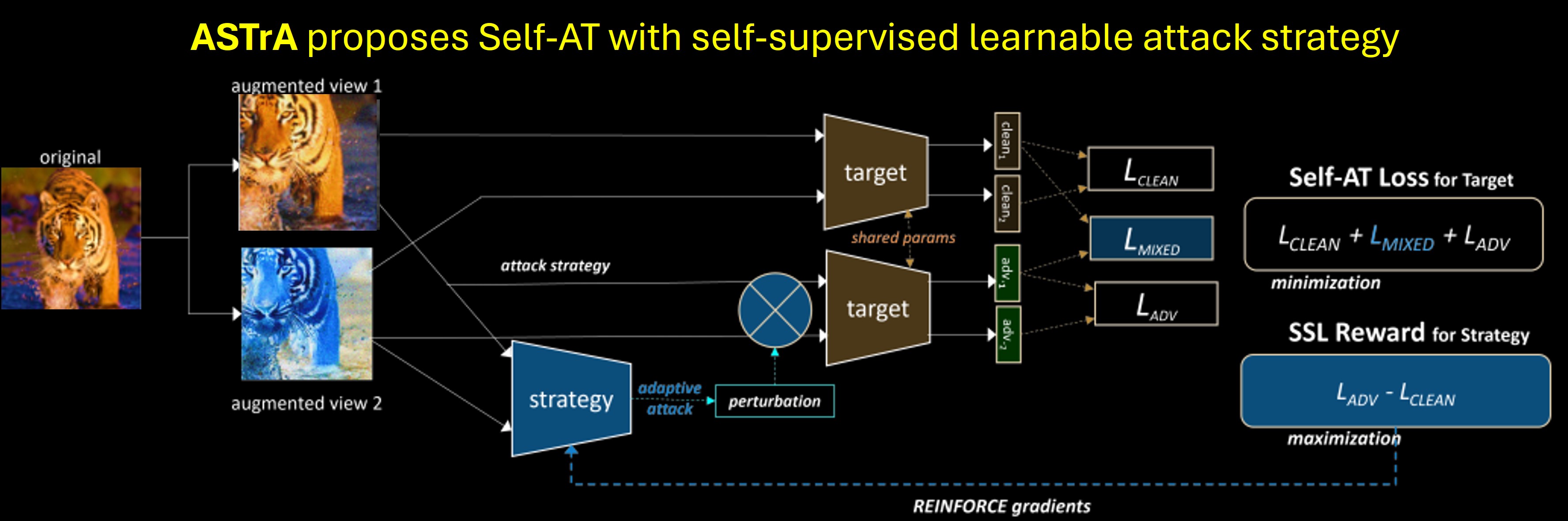

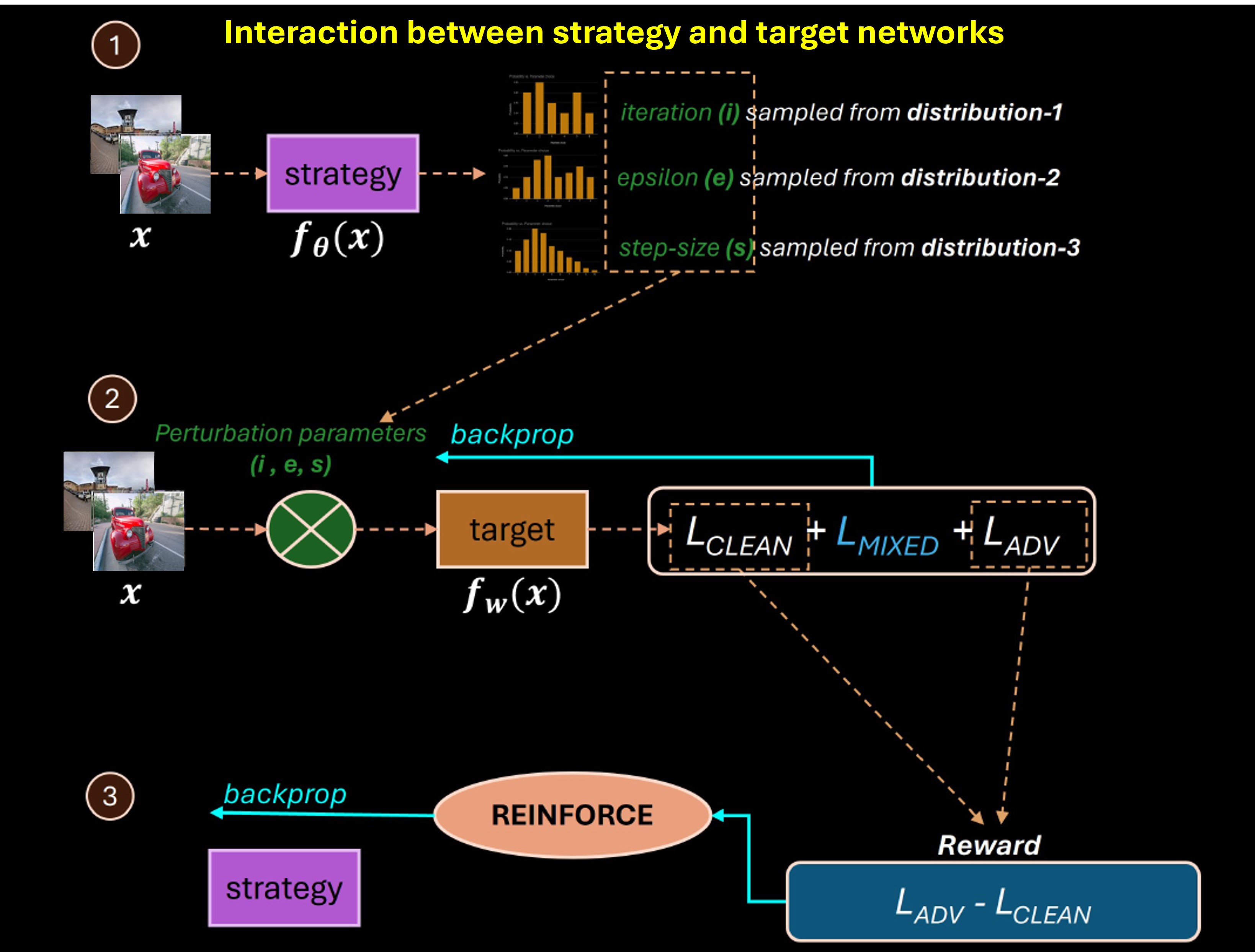

Proposed ASTrA Method

ASTrA introduces a learnable adversarial attack strategy, enabling the generation of adaptive perturbations that optimize SSL representations. ASTrA employs a reinforcement learning-based framework to adaptively refine attack strategies.

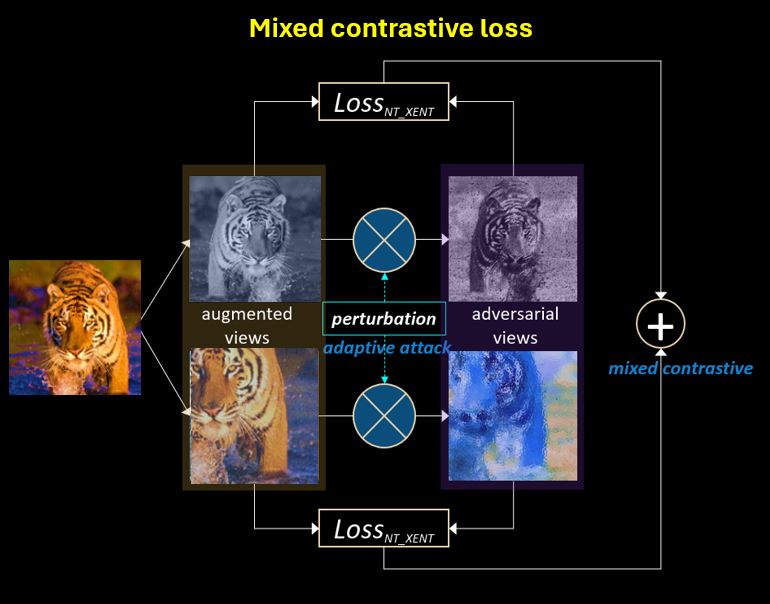

Mixed Contrastive Loss: ASTrA incorporates a mixed contrastive loss that combines clean and adversarial views for robust representation learning, resulting in robust alignment in representation space.
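As a rough illustration of how clean and adversarial views might be combined, the sketch below mixes two InfoNCE-style terms with a scalar weight. The function names, the `weight` and `temperature` parameters, and the choice of InfoNCE are assumptions made for illustration only; the exact loss used by ASTrA is defined in the paper.

```python
import math


def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchors, positives, temperature=0.5):
    """Mean InfoNCE loss: positives[i] pairs with anchors[i]; other rows act as negatives."""
    total = 0.0
    for i, anchor in enumerate(anchors):
        logits = [cosine(anchor, p) / temperature for p in positives]
        top = max(logits)
        # Numerically stable log-sum-exp over all candidates.
        log_denominator = top + math.log(sum(math.exp(l - top) for l in logits))
        total += log_denominator - logits[i]
    return total / len(anchors)


def mixed_contrastive_loss(clean_a, clean_b, adversarial, weight=0.5, temperature=0.5):
    """Hypothetical mix: weight * clean-clean term + (1 - weight) * clean-adversarial term."""
    clean_term = info_nce(clean_a, clean_b, temperature)
    adversarial_term = info_nce(clean_a, adversarial, temperature)
    return weight * clean_term + (1.0 - weight) * adversarial_term
```

In this toy form, an adversarial view that drifts away from its clean counterpart in embedding space raises the second term, so minimizing the mixed loss pulls clean and adversarial embeddings of the same sample back together.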

Interaction Between Strategy and Target Network: ASTrA leverages a dual-network framework where the strategy network generates perturbations, and the target network learns SSL representations.
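The interplay between the two networks can be sketched with a toy policy-gradient loop. This is a minimal sketch under strong assumptions: the strategy "network" is reduced to a vector of logits over a hypothetical list of candidate attack strengths, the update rule is plain REINFORCE with a moving-average baseline (the page only states that the framework is reinforcement learning-based), and `reward_fn` is a stand-in for whatever signal the target network provides.

```python
import math
import random


def softmax(logits):
    """Convert logits to a probability distribution."""
    top = max(logits)
    exps = [math.exp(l - top) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_step(logits, action, reward, baseline, lr):
    """One REINFORCE update: d log pi(action) / d logit_k = 1[k == action] - pi_k."""
    probs = softmax(logits)
    advantage = reward - baseline
    return [
        logit + lr * advantage * ((1.0 if k == action else 0.0) - p)
        for k, (logit, p) in enumerate(zip(logits, probs))
    ]


def train_strategy(candidates, reward_fn, steps=2000, lr=0.5, seed=0):
    """Learn a distribution over candidate attack parameters (all values hypothetical)."""
    rng = random.Random(seed)
    logits = [0.0] * len(candidates)
    baseline = 0.0
    for _ in range(steps):
        probs = softmax(logits)
        # Strategy side: sample an attack parameter from the current policy.
        action = rng.choices(range(len(candidates)), weights=probs)[0]
        # Target side: in a real system this reward would come from the SSL model.
        reward = reward_fn(candidates[action])
        logits = reinforce_step(logits, action, reward, baseline, lr)
        baseline = 0.9 * baseline + 0.1 * reward
    return softmax(logits)
```

A call such as `train_strategy([2, 4, 8, 16], lambda eps: -((eps - 8) ** 2) / 64)` uses a made-up reward that peaks at 8, so probability mass should shift toward that candidate over training. In an actual Self-AT setup the reward would change as the target network learns, which is what makes a learned strategy different from a fixed heuristic.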

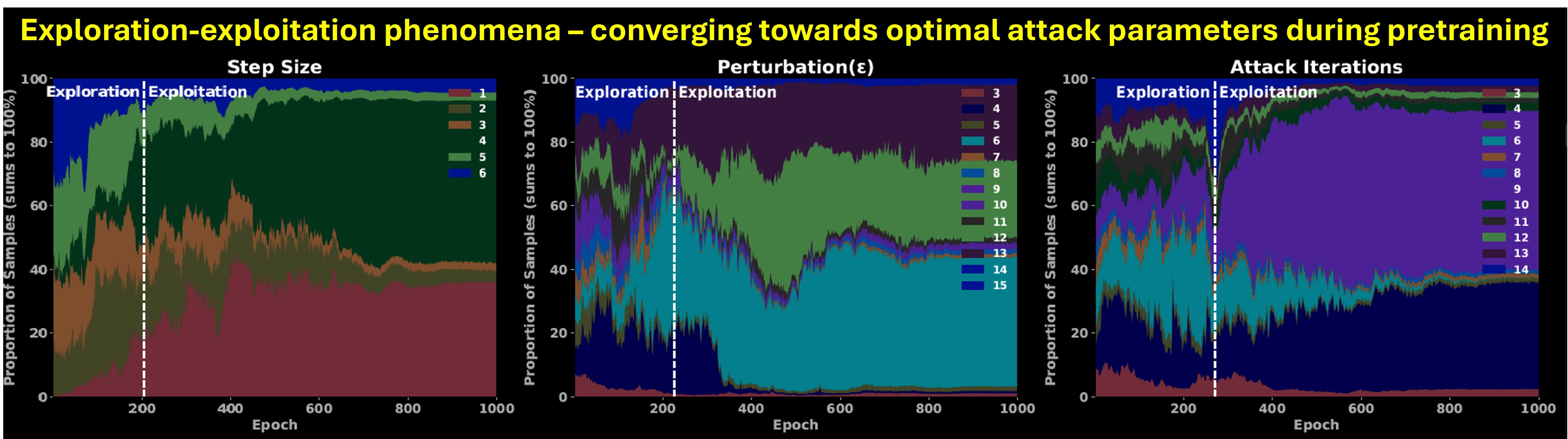

Exploration-Exploitation Phenomena: ASTrA dynamically balances exploration and exploitation during self-supervised pretraining, converging to optimal attack parameters regardless of the dataset. Rather than converging to a single atomic value for each attack parameter, it leverages a combination of dominant values based on the learning dynamics of the model at a given point in training.
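One simple way to inspect this behavior, assuming the strategy is represented as a probability distribution over candidate parameter values, is to track the entropy of that distribution and list the values that hold most of the mass. The helper names, the threshold, and the example numbers below are hypothetical and are not taken from the paper.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; higher means more exploration."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def dominant_values(candidates, probs, threshold=0.2):
    """Candidate values whose probability is at least the threshold."""
    return [c for c, p in zip(candidates, probs) if p >= threshold]


candidates = [2, 4, 8, 16]               # hypothetical attack strengths
early = [0.25, 0.25, 0.25, 0.25]         # uniform: pure exploration
late = [0.05, 0.45, 0.45, 0.05]          # mass concentrated on two values

print(entropy(early) > entropy(late))    # True
print(dominant_values(candidates, late)) # [4, 8]
```

A distribution like `late` has lower entropy than the uniform start but still keeps more than one dominant value, which matches the description of a combination of values rather than a single point estimate.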

Results

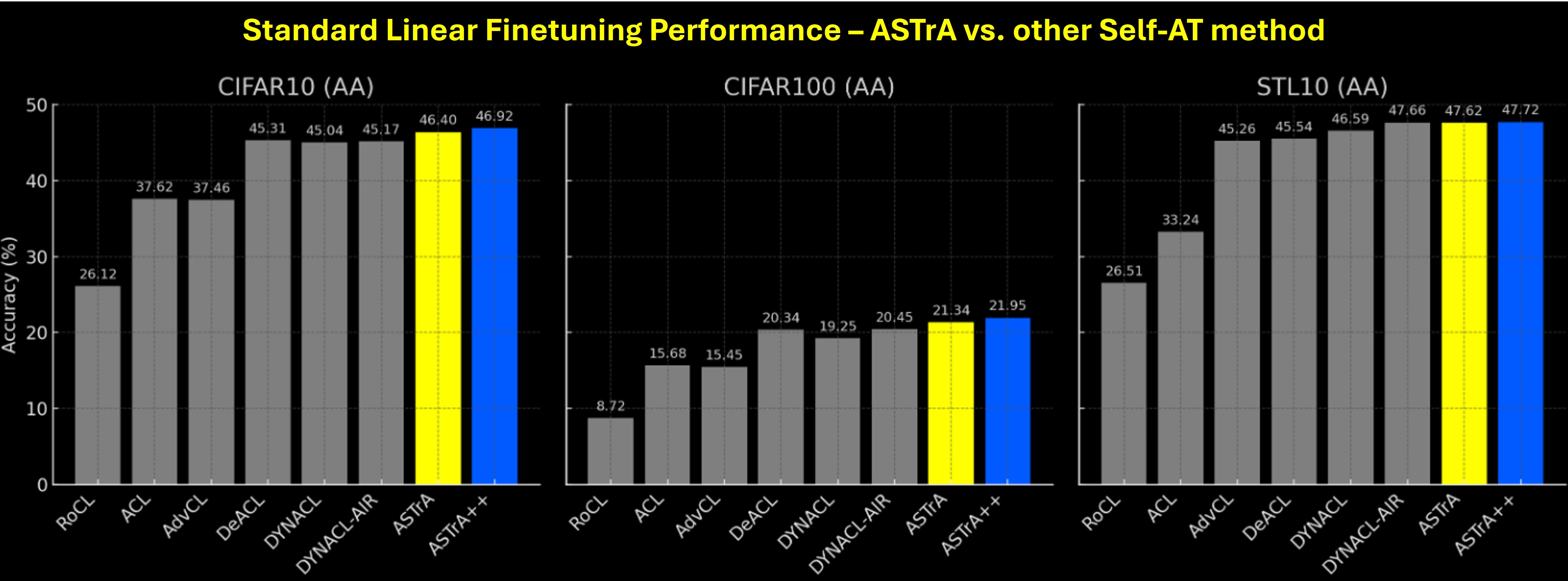

Standard Linear Finetuning: Results show that ASTrA outperforms other methods on several benchmarks (more results in the paper).

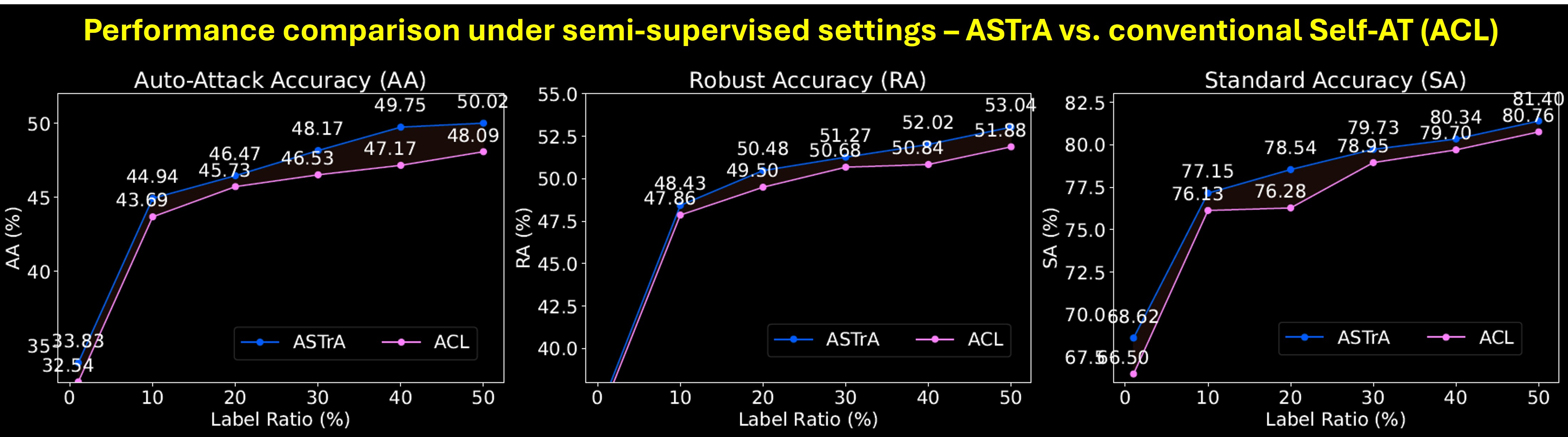

Semi-supervised Settings: ASTrA demonstrates superior performance in semi-supervised learning compared to conventional Self-AT methods.

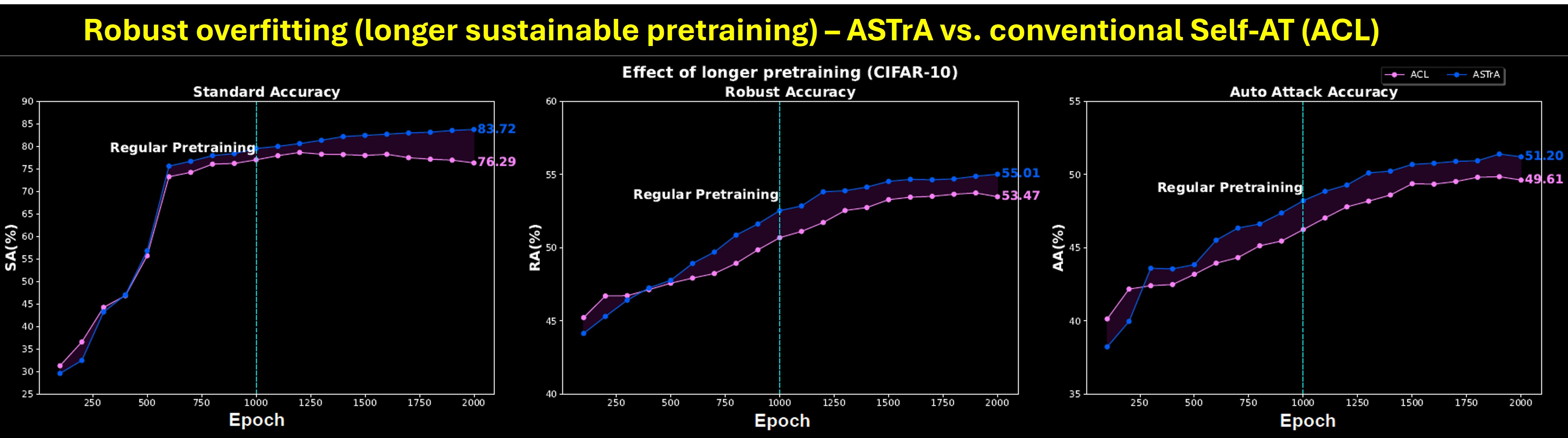

Robust Overfitting: ASTrA sustains longer pretraining epochs without overfitting, outperforming conventional methods.

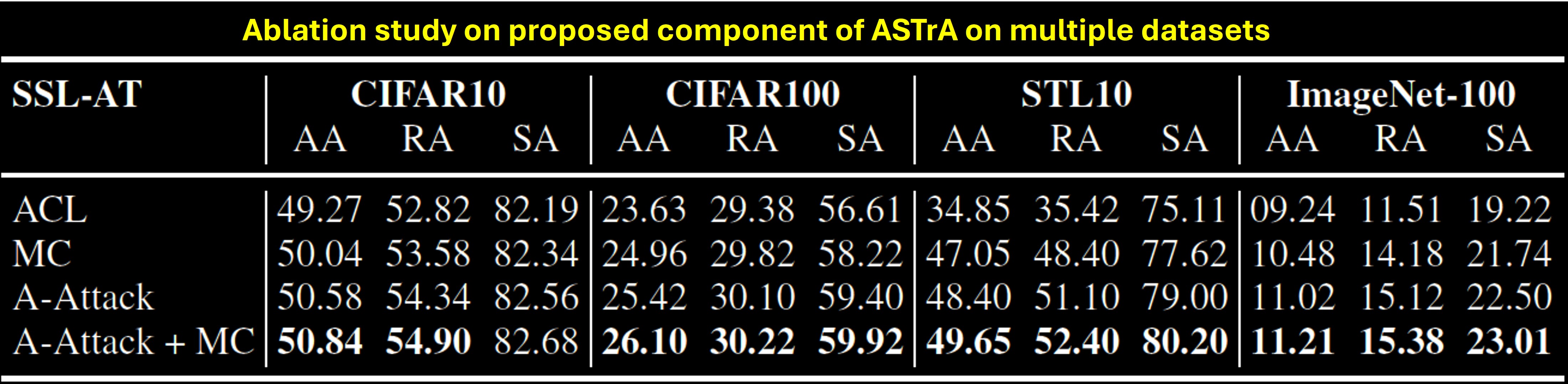

Ablation Study: A comprehensive ablation study highlights the effectiveness of ASTrA components across multiple datasets.

We encourage readers to read the full paper and watch the video for more details.
